Boring Personal STUFF

I’ve neglected this project badly. Aside from being a first-class procrastinator, I am also prone to getting overwhelmed by things. The latter is what happened here. I was at peak enthusiasm when the Kaggle Earthquake Challenge came along. Coincidentally, my computers all decided to fail at the same time. Not really a big deal, just needed to get things fixed, didn’t take very long. But it knocked the wind out of my sails. Just couldn’t face it at the time.

Fast forward. I’ve just had a couple of weeks knocked out by Covid, clear now. I do need to chase a contract for $$$s like yesterday, but I’m not quite up to working on someone else’s project just yet. I’ve probably got about 100 unfinished projects I could get back to, software and various lumps of electronics sitting on my shelves. But ($$$s aside), this one stands out a mile as being the most worthwhile. So now I’m ready to get back onto the horse/bicycle/crag.

The Proposition

I’m sure I’ve got something similar in this blog’s description, but the basic idea is to use machine learning to identify patterns of correlation between natural radio signals and seismic events and then attempt to make useful earthquake predictions from radio precursors. I have no illusions about this. I reckon that, in the best case, very approximate predictions for a very small proportion of events are possible. It won’t be easy and it will take a lot of time. But given how cataclysmic such events can be, it’s worth a try.

The Plan

A handful of separate components are needed. At the core: data acquisition, a model, a notification system. I think a reasonable 1000 ft view is that of a control system – inputs, processing, outputs, (validation/)feedback. All of which will need creating and tuning.

I really like fiddling with electronics hardware and have put in many hours’ work on the sensor/data acquisition parts of the system. Very poor use of my very limited cognitive resources. After a long break from this, I can shout at myself:

The novel part of this system is around the model.

I think it makes sense to narrow the geographic scope as much as possible, and ‘near me’ is an obvious choice. I live in northern Italy.

High-quality seismic data is available from the National Institute of Geophysics and Volcanology, INGV. Conveniently, the guy who literally wrote the book on natural radio signals has monitoring equipment, streaming live from up near Turin (VLF.it). Also conveniently, in an unfortunate sense, this is an active seismic region (it was the devastating quake of 2009 around L’Aquila that got me wondering about this…not to mention the one in 1920 that reduced Villa Collemandina to rubble, a village I can see from my balcony).

But I have no idea what the model should look like yet. Early on when I was thinking about this, I had a little lightbulb moment. Convolutional networks have been shown to be really efficient at pulling out salient features from images. A human-friendly way of representing natural radio signals is as a spectrogram. Those should be amenable to reduction by off-the-shelf shape recognition algorithms. The tricky bit is the long-term temporal axis of the radio & seismic data. LSTMs probably won’t hack it, but by now there’s probably an appropriate successor. (Ideally the training/application phases will be concurrent, which is a rabbit hole in my near future.)
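
To make the spectrogram idea a bit more concrete, here is a minimal sketch of turning a 1-D signal into an image-like array that a convolutional network could eat. Everything in it is my assumption rather than a decided design: it uses NumPy only, the function name `spectrogram`, the frame length and the hop size are placeholders, and the test signal is a synthetic sine wave, not real VLF data.

```python
import numpy as np

def spectrogram(signal, frame_len=256, hop=128):
    """Short-time Fourier transform magnitude in dB.

    Returns an array shaped (frequency_bins, time_frames), i.e. something
    that can be treated as a single-channel image.
    frame_len and hop are illustrative values, not tuned for radio data.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if len(signal) < frame_len:
        raise ValueError("signal is shorter than one frame")
    window = np.hanning(frame_len)  # taper each frame to reduce spectral leakage
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack(
        [signal[i * hop : i * hop + frame_len] * window for i in range(n_frames)]
    )
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    return 10.0 * np.log10(power + 1e-12).T  # small offset avoids log(0)

# Synthetic stand-in for a radio recording: one second of a 1 kHz tone.
sample_rate = 8000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 1000 * t)

image = spectrogram(tone)
peak_hz = image[:, 0].argmax() * sample_rate / 256  # bin index -> frequency
print(image.shape, peak_hz)
```

Each column is one time slice, so a long recording just becomes a very wide image. That width is exactly the long-term temporal axis problem mentioned above; this sketch does nothing to solve it.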

There is an advantage to putting a project on hold for a while, however inadvertent. The software equivalent of Sun Tzu’s “If you wait by the river long enough, the bodies of your enemies will float by.” Someone else will figure out the algorithms you need.

If it really needs stating, I’m way behind the curve of developments in Deep Learning. But what I think I’ve gathered from the little experiments I’ve tried is that I can play at very small scale on my mediocre home computer (no GPU), acquire/pre-process the data, perhaps get a proof-of-concept (toy!) model topology together. Then scale up onto a cloud service.

Necessary for that is creating an environment in which to code…remembering how to code… Ok, I’m looking at Python, TensorFlow/Keras and/or PyTorch.

So before I consider even a toy version of anything earthquake-related, I need to gently paddle into the water. Last night I had a Brilliant Idea!

Zoltán who?

The prompt for this was probably Flight of the Bumblebee on the Theremin. (She did two takes – one for the sounds, one for the bee. I initially thought she’d ‘cheated’, using a MIDI theremin for note separation – nope. Just put it through tremolo, got her movements against it perfect.)

Ok, so Close Encounters of the Third Kind. And/or, Sound of Music. Do, re, mi…

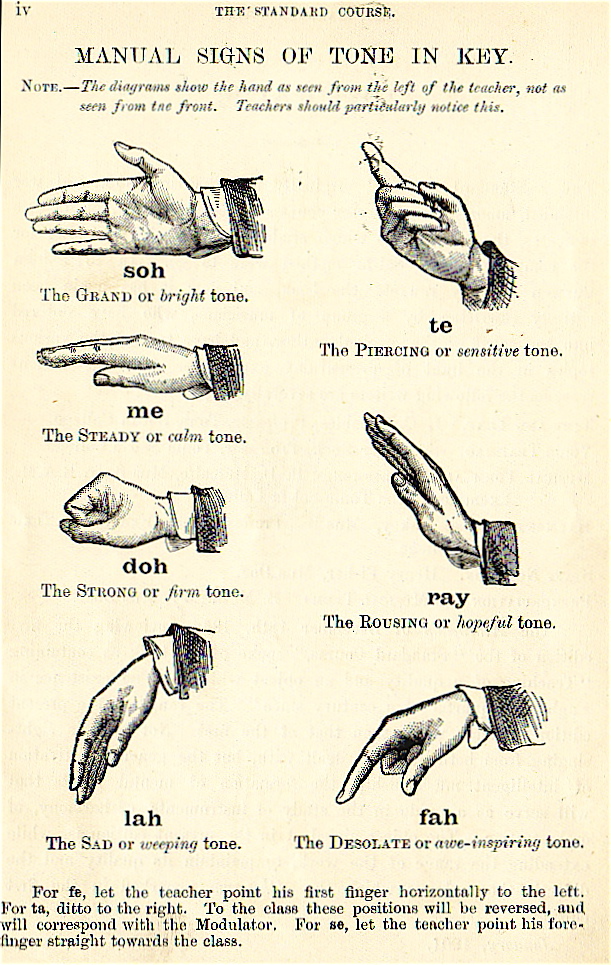

With the Sol-Fa notation (which seems better known than C, D, E… in Italy, btw), Zoltán Kodály, a 20th-century music teacher, built on Curwen et al’s work to have kids do hand signals corresponding to each note’s role/feeling in the scale.

Well, that would be a cool way of playing an instrument.

Naturally I googled it. Naturally, it’d been done by 2016: MiLa: An Audiovisual Instrument for Learning the Curwen Hand Signs.

But naaaah! I don’t have access to the paper, but in the abstract it says they used ‘a Leap motion sensor’. Apparently those are spatial tracking things like the IR etc. used with VR kit.

Why not just use a camera?

Grab a frame from the webcam, convert it to 28×28 pixel greyscale, associate it with one of the 7 labels. Use one of the models known to work well with the MNIST handwritten digit benchmark dataset. Play the Five Tones.
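
As a rough sketch of the frame-to-28×28 step (assumptions flagged: `to_mnist_style`, `grab_frame` and the `LABELS` list are names I’ve made up for illustration, and the shrinking is done with plain NumPy block averaging so it runs without OpenCV). In practice `cv2.cvtColor` plus `cv2.resize` would be the more usual route. The webcam grab assumes the `opencv-python` package is installed and is only imported when it is actually called.

```python
import numpy as np

# Hypothetical label set: the seven solfege syllables, one per hand sign.
LABELS = ["do", "re", "mi", "fa", "sol", "la", "ti"]

def to_mnist_style(frame_bgr, size=28):
    """Centre-crop a BGR frame to a square, convert to greyscale,
    shrink to size x size by block averaging, and scale to [0, 1]."""
    height, width = frame_bgr.shape[:2]
    side = min(height, width)
    if side < size:
        raise ValueError("frame is smaller than the target size")
    top = (height - side) // 2
    left = (width - side) // 2
    square = frame_bgr[top : top + side, left : left + side].astype(np.float32)
    # Standard luma weights, in B, G, R order (OpenCV's channel order).
    grey = square @ np.array([0.114, 0.587, 0.299], dtype=np.float32)
    block = side // size  # pixels averaged per output pixel, per axis
    trimmed = grey[: block * size, : block * size]  # drops a few edge pixels
    small = trimmed.reshape(size, block, size, block).mean(axis=(1, 3))
    return small / 255.0

def grab_frame(camera_index=0):
    """Read one frame from a webcam. Assumes opencv-python is installed."""
    import cv2  # imported lazily so the rest of the sketch runs without it
    capture = cv2.VideoCapture(camera_index)
    ok, frame = capture.read()
    capture.release()
    if not ok:
        raise RuntimeError("could not read a frame from the webcam")
    return frame

# Stand-in for a real 640x480 webcam frame: uniform mid-grey.
fake_frame = np.full((480, 640, 3), 128, dtype=np.uint8)
sample = to_mnist_style(fake_frame)
print(sample.shape, LABELS[0])
```

The output has the same shape and value range as a normalised MNIST digit, which is the whole point: a model built for that benchmark should accept it with only the number of output classes changed from 10 to 7.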

So I’m now in the process of building OpenCV-Python. Predictably my environment was a mess; Anaconda doesn’t seem to play well with Qt/Wayland/Ubuntu.

All being well, I can get a script together that tells me what hand shape to hold and collects a few thousand images within a reasonable time. Then find a model, train it, add bleeps.

I’m talking long before I get onto TensorFlow or whatever. Hey ho. Could wait forever for an environment configuration to float by (wasn’t that the whole point of VMs, Docker etc? But when you need one, float on by…).
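
The ‘tell me what hand shape to hold’ part could be as simple as a shuffled, balanced list of prompts. A minimal sketch, standard library only; the names `make_prompt_schedule` and `filename_for`, the label list and the file naming scheme are all hypothetical, and the actual camera capture is left out.

```python
import random
from collections import Counter

# Hypothetical label set: the seven solfege syllables.
LABELS = ["do", "re", "mi", "fa", "sol", "la", "ti"]

def make_prompt_schedule(labels, per_label, seed=None):
    """Return a shuffled list of prompts with exactly per_label of each label.

    Shuffling avoids recording all of one hand sign in a single run, so
    lighting drift and tired arms get spread across the classes.
    """
    schedule = [label for label in labels for _ in range(per_label)]
    random.Random(seed).shuffle(schedule)
    return schedule

def filename_for(label, index):
    """Encode the label in the file name so no separate label file is needed."""
    return f"{label}_{index:05d}.png"

# 300 images per sign gives 2100 in total, i.e. 'a few k'.
schedule = make_prompt_schedule(LABELS, per_label=300, seed=0)
print(len(schedule), Counter(schedule)["do"])

# Show the first few prompts; a real script would grab and save a frame here.
for index, label in enumerate(schedule[:5]):
    print(f"hold '{label}' -> would save {filename_for(label, index)}")
```

Keeping the classes balanced from the start means one less thing to fix at training time.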

Should be straightforward once the environment is set up. Which is the purpose of the exercise.