
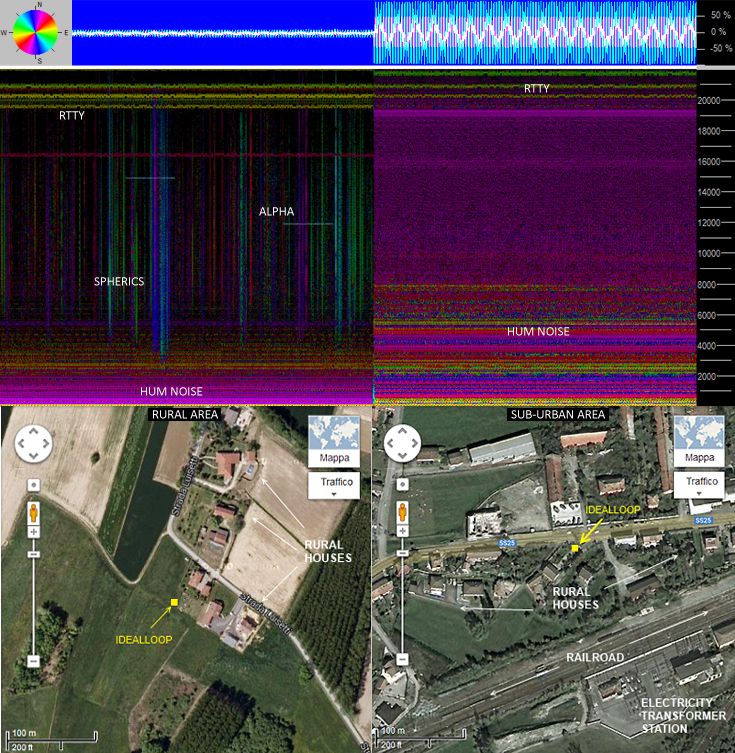

I’m in a relatively noisy environment when it comes to mains hum, with overhead power lines nearby, so any ELF/VLF receiver I put together will have to deal with this.

To get an idea of what kind of filtering I might need I simply put a jack plug with bare terminals into the mic in of a laptop, held on to it to make myself an antenna, and recorded for half a minute using Audacity. Here’s a snippet of the resulting waveform:

Yup, that is one well-distorted sine wave. It reminds me of the waveform going to bulbs from triac-based dimmers, though I haven’t any in the house.

More usefully, here’s the spectrum plot (Audacity rocks!):

There’s a clear peak at 50Hz. Next highest is at 150Hz, the 3rd harmonic. It’s around 12dB down, which (assuming the plot shows a voltage ratio, i.e. 20*log10(V2/V1)) is about 1/4 of the voltage. Next comes 100Hz, the 2nd harmonic, about 30dB down, or about 1/32 of the voltage (from ratio = 10^(dB/20), with dB negative for "down").
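The dB arithmetic above can be checked with a few lines of Python. This is only a sketch: the function names are made up for the example, and it assumes, as the text does, that the plot shows voltage ratios rather than power ratios.

```python
import math

def db_to_voltage_ratio(db):
    """Voltage (amplitude) ratio for a level in dB: 10^(dB/20)."""
    return 10 ** (db / 20)

def voltage_ratio_to_db(ratio):
    """Level in dB for a voltage (amplitude) ratio: 20*log10(ratio)."""
    return 20 * math.log10(ratio)

# Levels read off the spectrum plot, relative to the 50Hz peak.
third_harmonic = db_to_voltage_ratio(-12)   # 150Hz
second_harmonic = db_to_voltage_ratio(-30)  # 100Hz

print(f"-12dB -> {third_harmonic:.3f}, roughly 1/{1 / third_harmonic:.0f}")
print(f"-30dB -> {second_harmonic:.4f}, roughly 1/{1 / second_harmonic:.0f}")
```

If the plot were showing power ratios instead, the divisor would be 10 rather than 20 and the voltage fractions would come out differently.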

(I’m in Italy where, like most of the world, the mains AC frequency is 50Hz. In the Americas it tends to be 60Hz.)

So I reckon I definitely need to cut the 50Hz as much as possible, probably 150Hz too.

Digital filters are relatively straightforward to implement in software, but here there’s a snag. The incoming signal is analog, so will need to go through an ADC. The ELF/VLF signal of interest is likely to be of very small amplitude compared to the mains hum. So using, say, a 16-bit ADC scaled to capture the whole signal without clipping, it’s conceivable that the interesting signal only occupies a couple of bits, or maybe even falls below a single bit. So really the filtering has to happen in the analog chain, before the ADC. Experimentation will be needed, but I imagine a setup like this will be required:
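To put rough numbers on the resolution problem, the sketch below estimates how many ADC steps the wanted signal would span if the hum alone filled the full input range of a 16-bit converter. The signal levels of 60, 80 and 100dB below the hum are purely hypothetical figures chosen for illustration, not measurements.

```python
import math

def signal_span_in_steps(adc_bits, db_below_hum):
    """Peak-to-peak span of the wanted signal in ADC steps (LSBs),
    assuming the hum alone fills the converter's full input range."""
    full_scale_steps = 2 ** adc_bits
    amplitude_ratio = 10 ** (-db_below_hum / 20)
    return full_scale_steps * amplitude_ratio

for db_below_hum in (60, 80, 100):  # hypothetical signal levels
    steps = signal_span_in_steps(16, db_below_hum)
    print(f"{db_below_hum}dB below the hum: {steps:.2f} steps "
          f"(log2 = {math.log2(steps):+.1f} bits)")
```

Under these assumptions a signal 80dB below the hum spans only a handful of steps, and one 100dB below spans less than a single step, which is the case for removing the hum before conversion.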

There are a few options for the kind of receiver to use, essentially coil-based (picking up the magnetic component of the radio wave) or antenna-based (the electric component). The nature of the early circuitry and pre-amp will depend on this choice. But the main role of the pre-amp is to boost the signal well above the noise floor of subsequent stages (using low-noise components). At a first guess, a gain in the region of x10 to x100 should be adequate.

Next comes the filter(s). Now the fortunate thing here is that the ELF/VLF frequency ranges I’m considering, say 5Hz-20kHz, are pretty much the audio frequency ranges and are thus within the scope of standard audio components. Well, 5Hz is below the nominal 20Hz-20kHz figures given for audio, but the key thing is that at the high end, it’s nowhere near anything requiring exotic components. Even the humble 741 op-amp (dating from 1968) has a unity-gain bandwidth around 1MHz. For the TL071 family, a reasonable low-cost default these days, it’s 3MHz.
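The unity-gain bandwidth figures can be turned into an approximate usable bandwidth for a given stage gain. The sketch below uses the common first-order rule of thumb that closed-loop bandwidth is roughly the gain-bandwidth product divided by the gain. It assumes a single-pole op-amp response and a single non-inverting stage, so treat the results as ballpark values only.

```python
def closed_loop_bandwidth_hz(gbw_hz, gain):
    """Approximate -3dB bandwidth of one op-amp stage: GBW / gain.
    First-order estimate, assuming a single-pole open-loop response."""
    return gbw_hz / gain

# Unity-gain bandwidths quoted in the text.
GBW_HZ = {"741": 1e6, "TL071": 3e6}

for name, gbw_hz in GBW_HZ.items():
    for gain in (10, 100):
        bandwidth_hz = closed_loop_bandwidth_hz(gbw_hz, gain)
        print(f"{name} at x{gain}: about {bandwidth_hz / 1000:.0f}kHz")
```

On this estimate a single 741 stage at x100 would already be rolling off around 10kHz, so for the top of the 5Hz-20kHz range it may be worth either using the faster part or splitting the gain across two stages.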

One option for filtering the mains hum out would be to use a high pass filter and only look at the higher end of VLF (conversely, a low pass filter and go for ELF). But notch (band stop) filters can be pretty straightforward, so it should be productive to target just the 50Hz (and maybe 150Hz).

(A more exotic approach would be to use something like an analog bucket brigade delay line, as used in many analog phaser & flanger audio effects boxes, with its delay fixed at the period of the 50Hz fundamental. Mixing the delayed signal, inverted, with the non-inverted input will cause cancellation at the fundamental and all its harmonics, i.e. a comb filter. But not only does that seem overkill here, it will in effect degrade the signal of interest.)
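The comb behaviour of the delay-and-subtract idea follows from its frequency response, |1 - e^(-j2πfT)|, where T is the delay. The sketch below evaluates that expression for an idealised delay of exactly one mains period. The test frequencies are arbitrary picks for illustration.

```python
import cmath
import math

MAINS_HZ = 50.0
DELAY_S = 1.0 / MAINS_HZ  # one full mains period, 20ms

def comb_gain(freq_hz, delay_s=DELAY_S):
    """|H(f)| for y(t) = x(t) - x(t - delay_s), an ideal comb filter."""
    return abs(1 - cmath.exp(-2j * math.pi * freq_hz * delay_s))

for freq_hz in (25, 50, 75, 100, 150, 1000, 1010, 1025):
    print(f"{freq_hz:5d}Hz: gain {comb_gain(freq_hz):.3f}")
```

The gain swings between 0 and 2 every 50Hz across the whole band, not just near the mains frequency, which is the degradation of the wanted signal mentioned above. A real bucket brigade device is also a clocked, sampled system with its own noise, which this idealised sketch ignores.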

There are a few alternatives for notch filters. While they can be built from passive components, there are significant benefits to using active components, especially in terms of controlling the parameters. For these reasons and circuit simplicity, op-amps are a good choice over discrete components.

There are three leading candidate circuit topologies, as follows.

Active Twin-T Notch

This classic passive circuit is the starting point.

The notch frequency is given by fc = 1 / (2 pi R C)
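As a worked example of the formula, the sketch below picks a capacitor value and solves for the resistor. It assumes the usual symmetric twin-T arrangement (two series resistors R with a shunt capacitor 2C, and two series capacitors C with a shunt resistor R/2). The 100nF starting value is an arbitrary choice for illustration.

```python
import math

def twin_t_values(notch_hz, c_farads):
    """Component values for a symmetric twin-T, from fc = 1 / (2 pi R C).
    Assumes the standard R, R, R/2 and C, C, 2C arrangement."""
    r_ohms = 1 / (2 * math.pi * notch_hz * c_farads)
    return {"R": r_ohms, "R/2": r_ohms / 2, "C": c_farads, "2C": 2 * c_farads}

for notch_hz in (50, 150):
    values = twin_t_values(notch_hz, 100e-9)  # 100nF is an assumed choice
    print(f"{notch_hz}Hz: R = {values['R']:.0f} ohms, "
          f"R/2 = {values['R/2']:.0f} ohms, "
          f"C = 100nF, 2C = 200nF")
```

The calculated resistances will not land on standard values, which is one reason trimming with a pot in series with a slightly smaller fixed resistor is attractive for a one-off build.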

This assumes a low impedance source for Vin and a high impedance connected to Vo, which can easily be achieved using op-amp buffers. One drawback of this setup is that its selectivity, the slope of the sides of the notch, is fairly poor. This can be significantly improved by using op-amps to bootstrap the T:

The notch frequency is determined as for the grounded T above, only this time the Q/selectivity can be varied, according to the values of R4 and R5.
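The effect of bootstrapping on selectivity can be sketched with the ideal transfer function. For a symmetric twin-T whose common leg is driven with a fraction k of the output instead of being grounded, the response is (s² + ω0²) / (s² + 4(1 - k)ω0·s + ω0²), giving Q = 1 / (4(1 - k)). Here k is a hypothetical parameter introduced for this example. How it maps onto R4 and R5 depends on the actual circuit, so that mapping is deliberately left out.

```python
import math

def notch_gain(freq_hz, f0_hz=50.0, k=0.0):
    """|H(jw)| of an ideal twin-T notch with feedback fraction k.
    k = 0 is the plain grounded T; k close to 1 gives a narrow notch."""
    s = 2j * math.pi * freq_hz
    w0 = 2 * math.pi * f0_hz
    numerator = s * s + w0 * w0
    denominator = s * s + 4 * (1 - k) * w0 * s + w0 * w0
    return abs(numerator / denominator)

def notch_q(k):
    """Q of the ideal notch for feedback fraction k: 1 / (4 * (1 - k))."""
    return 1 / (4 * (1 - k))

for k in (0.0, 0.9, 0.98):  # illustrative feedback fractions
    q = notch_q(k)
    print(f"k = {k}: Q = {q:.2f}, -3dB width about {50.0 / q:.0f}Hz, "
          f"gain at 40Hz = {notch_gain(40.0, k=k):.3f}")
```

With k = 0 the notch is about 200Hz wide at the -3dB points, so a 50Hz notch also takes a large bite out of everything from roughly 12Hz to 212Hz. Raising k narrows it considerably, at the cost of making the circuit more sensitive to component errors.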

But a troublesome problem remains: all 6 components on which the frequency depends have to have precise values to place the notch where required. Any variation is likely to lead to a sloppy notch, of low Q. While 1% tolerance resistors are the norm these days, capacitors tend to have tolerances more like 5 or 10%. One option is to use reasonably well-matched capacitors (from the same batch) and vary the resistors. But this still leaves 3 variables, with some level of interdependence.
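The sensitivity to component values can be illustrated numerically. The sketch below solves the node equations of an unloaded passive twin-T driven from an ideal source, first with ideal values and then with only the shunt capacitor 5% high. The designators r1 to c3 and the 100nF base value are assumptions made for this example, not taken from the circuit diagram.

```python
import math

def twin_t_gain(freq_hz, r1, r2, r3, c1, c2, c3):
    """|Vout/Vin| of an unloaded passive twin-T driven from an ideal source.
    r1, r2: series resistors, with c3 as their shunt capacitor to ground.
    c1, c2: series capacitors, with r3 as their shunt resistor to ground."""
    s = 2j * math.pi * freq_hz
    g1, g2, g3 = 1 / r1, 1 / r2, 1 / r3
    ya = g1 + g2 + s * c3        # total admittance at the resistive-T node
    yb = s * c1 + s * c2 + g3    # total admittance at the capacitive-T node
    numerator = g1 * g2 / ya + (s * c1) * (s * c2) / yb
    denominator = g2 + s * c2 - g2 * g2 / ya - (s * c2) ** 2 / yb
    return abs(numerator / denominator)

def to_db(gain):
    """Gain in dB, floored to avoid log10(0) at a perfect null."""
    return 20 * math.log10(max(gain, 1e-12))

F0_HZ = 50.0
C = 100e-9                            # assumed base capacitor value
R = 1 / (2 * math.pi * F0_HZ * C)     # from fc = 1 / (2 pi R C)

ideal = twin_t_gain(F0_HZ, R, R, R / 2, C, C, 2 * C)
mismatched = twin_t_gain(F0_HZ, R, R, R / 2, C, C, 2 * C * 1.05)

print(f"ideal values:       {to_db(ideal):.0f}dB at 50Hz")
print(f"shunt cap 5% high:  {to_db(mismatched):.1f}dB at 50Hz")
```

With these assumed values a single 5% error limits the rejection at 50Hz to roughly 40dB, where the ideal network has a theoretically complete null. Errors in several components at once would combine in less predictable ways.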

(I’ve actually got this one on a breadboard at the moment. For a one-off circuit it isn’t unreasonable to use resistors a little below the calculated values in series with pots, and once fine-tuned, replace them with fixed values.)

Bainter Notch

This is quite a nifty circuit (and new to me). The main benefits are described in the title of Bainter’s own description: "Active filter has stable notch, and response can be regulated". Notch depth depends on gain and not (passive) component values.

I’ve yet to play with this one, but it certainly shows promise. A downside is that the component values calculation is rather unwieldy. Another ref. is this TI doc: Bandstop filters and the Bainter topology.

State Variable Filter

The State Variable topology is very versatile, offering high- and low-pass outputs as well as bandpass. By mixing the high- and low-pass outputs or the input with the bandpass output, a notch can be achieved. Crucially the gain, center frequency, and Q may be adjusted separately. A bonus compared to the Twin-T is that the frequency is determined by just 2 resistors and 2 capacitors. A few days ago I stumbled on a tweaked version of the standard topology which offers a few advantages. I won’t go into details here, it’s all described in the source of this diagram – Three-op-amp state-variable filter perfects the notch.
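The two ways of forming the notch can be checked against the ideal second-order responses. The sketch below uses textbook transfer functions with a common denominator and a bandpass output normalised to unity gain at the center frequency. Signs and gains of the outputs in a real circuit differ between topologies, so this is an idealisation rather than a model of the linked design.

```python
import math

def svf_outputs(freq_hz, f0_hz=50.0, q=10.0):
    """Ideal state variable filter responses (high-pass, bandpass, low-pass).
    The bandpass is normalised to unity gain at f0_hz."""
    s = 2j * math.pi * freq_hz
    w0 = 2 * math.pi * f0_hz
    denominator = s * s + (w0 / q) * s + w0 * w0
    high_pass = s * s / denominator
    band_pass = (w0 / q) * s / denominator
    low_pass = w0 * w0 / denominator
    return high_pass, band_pass, low_pass

for freq_hz in (40.0, 49.0, 50.0, 51.0, 60.0):
    hp, bp, lp = svf_outputs(freq_hz)
    notch_from_sum = abs(hp + lp)     # high-pass plus low-pass
    notch_from_input = abs(1 - bp)    # input minus bandpass
    print(f"{freq_hz:.0f}Hz: HP+LP = {notch_from_sum:.3f}, "
          f"input-BP = {notch_from_input:.3f}")
```

Both combinations give the same notch response, and only the `q` argument changes its width, which matches the point that center frequency and Q can be adjusted separately.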

Once I’ve played with the Twin-T a bit more, I’ll have a go with this one. I have a good feeling about it.